<div align="center">

# 🐾 Tabby

[License: Apache 2.0](https://opensource.org/licenses/Apache-2.0)

[Code style: black](https://github.com/psf/black)

</div>

> **Warning**
> Tabby is still in the alpha phase.

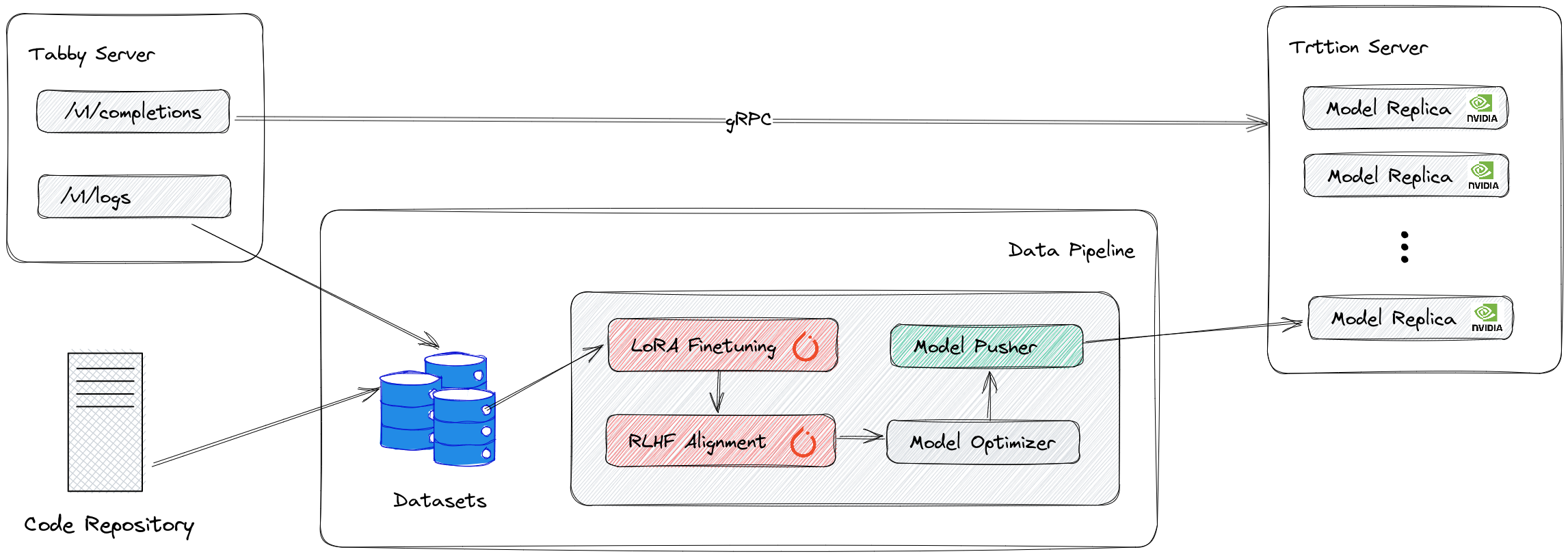

An open-source, on-premises alternative to GitHub Copilot.

## Features

* Self-contained, with no need for a DBMS or cloud service.
* Web UI for visualizing and configuring models and MLOps.
* OpenAPI interface, easy to integrate with existing infrastructure (e.g., Cloud IDE).
* Consumer-level GPU support (FP16 weight loading with various optimizations).

## Get started

The easiest way to get started is with `deployment/docker-compose.yml`:

```bash
docker-compose -f deployment/docker-compose.yml up
```

Note: To use GPUs, you need to install the [NVIDIA Container Toolkit](https://docs.nvidia.com/datacenter/cloud-native/container-toolkit/install-guide.html). We also recommend using NVIDIA drivers with CUDA version 11.8 or higher.
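
The server may not accept requests immediately after the containers start, so a script that queries it right away can fail. A minimal readiness-check sketch, assuming the server listens on `http://localhost:5000` as in the example below and that *any* HTTP response (even an error status) means it is up; `wait_for_server` is a hypothetical helper, not part of Tabby:

```bash
#!/usr/bin/env bash
# Hypothetical helper: poll a URL until the server answers or a timeout expires.
# Assumption: any HTTP response means the server is up, so curl is run without -f
# and only connection-level failures (refused, timed out) keep the loop going.
wait_for_server() {
  local url=${1:-http://localhost:5000}   # assumed default, taken from the curl example
  local timeout=${2:-120}                 # total seconds to wait (arbitrary default)
  local deadline=$((SECONDS + timeout))
  until curl -s -o /dev/null --max-time 5 "$url"; do
    if (( SECONDS >= deadline )); then
      echo "server at $url not reachable after ${timeout}s" >&2
      return 1
    fi
    sleep 2
  done
}
```

For example, `wait_for_server http://localhost:5000 300 && echo ready` waits up to five minutes. Probing with plain `curl` keeps the check independent of which paths the server actually serves.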

You can then query the server using the `/v1/completions` endpoint:

```bash
curl -X POST http://localhost:5000/v1/completions -H 'Content-Type: application/json' --data '{
    "prompt": "def binarySearch(arr, left, right, x):\n mid = (left +"
}'
```
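
Real prompts usually contain quotes, backslashes, and newlines that must be escaped before they can be embedded in the JSON request body. A minimal bash-only sketch, assuming the request shape from the `curl` example above (a single `prompt` field); `json_escape` and `complete_file` are hypothetical helpers, and the response is printed as-is because its schema is not described here:

```bash
#!/usr/bin/env bash
# Hypothetical helper: escape a string for use inside a JSON string literal.
# Handles backslash, double quote, newline, carriage return and tab only;
# other control characters are passed through unescaped.
json_escape() {
  local s=$1
  s=${s//'\'/'\\'}      # backslashes first, so later escapes are not doubled
  s=${s//'"'/'\"'}      # double quotes
  s=${s//$'\n'/'\n'}    # newlines -> \n
  s=${s//$'\r'/'\r'}    # carriage returns -> \r
  s=${s//$'\t'/'\t'}    # tabs -> \t
  printf '%s' "$s"
}

# Hypothetical helper: send a file's contents as the prompt and print the raw response.
complete_file() {
  local file=$1
  local url=${2:-http://localhost:5000/v1/completions}   # assumed default from the example above
  local prompt
  prompt=$(<"$file")    # note: command substitution strips trailing newlines
  curl -s -X POST "$url" \
    -H 'Content-Type: application/json' \
    --data "{\"prompt\": \"$(json_escape "$prompt")\"}"
}
```

For example, `complete_file src/search.py` sends that file as the prompt. If `jq` is installed, `jq -n --arg prompt "$(cat src/search.py)" '{prompt: $prompt}'` builds the same body with complete JSON escaping.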

We also provide an interactive playground in the admin panel at [localhost:8501](http://localhost:8501).

## TODOs

* [ ] Fine-tuning models on private code repositories.
* [ ] Plot metrics in the admin panel (e.g., acceptance rate).
* [ ] Production readiness (OpenTelemetry, Prometheus metrics).
* [ ] Token streaming using Server-Sent Events (SSE).